Biodiversity Science, 2025, Vol. 33, Issue 12: 25283. DOI: 10.17520/biods.2025283 cstr: 32101.14.biods.2025283

安家宁1,2,3, 张长春1,2,3, 王建涛5, 裴志永6, 白丹丹7, 张军国1,2,4,*


Corresponding author: * zhangjunguo@bjfu.edu.cn


Jianing An1,2,3, Changchun Zhang1,2,3, Jiantao Wang5, Zhiyong Pei6, Dandan Bai7, Junguo Zhang1,2,4,*

Received: 2025-07-20

Accepted: 2025-08-28

Online: 2025-12-20

Published: 2026-01-09

Abstract:

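Wildlife is an important component of ecosystems, and efficient image recognition and monitoring are important for its conservation. In practical wildlife image recognition, cross-domain distribution shifts caused by complex environmental backgrounds, together with interference from unknown species in the target domain, often degrade model generalization. To address these challenges, this paper proposes an open-set domain adaptation method for wildlife images that combines adversarial disentanglement with feature alignment. First, a domain-adversarial network is built on the residual network ResNet50. Next, a dual optimization strategy combining center alignment and orthogonal projection is applied, which further disentangles the feature space of unknown classes by strengthening the discriminability of known classes. Finally, an open-set domain adaptation recognition model for wildlife that combines adversarial disentanglement and feature alignment is constructed. Trained and evaluated on domain adaptation datasets containing 8 and 11 wildlife classes, the proposed method achieved Average-HOS values of 48.95% and 46.38%, respectively, which are 10.90 and 5.37 percentage points higher than the best competing method. Compared with the baseline model, the proposed method shows a clear performance advantage on open-set domain adaptation tasks. The joint optimization of adversarial disentanglement and feature alignment proposed here can effectively address domain shift and unknown-class interference in wildlife recognition, thereby improving cross-domain generalization and unknown-class recognition in open scenarios.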
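The two feature-space terms named in the abstract can be illustrated with a small sketch. The code below is not the authors' implementation: it follows the generic textbook forms of center loss (mean half squared distance to a class center) and orthogonal projection loss (pull same-class features together in cosine similarity, push different-class features toward orthogonality). The function names, the `gamma` default, the use of the absolute cosine for different-class pairs, and the toy inputs are all assumptions made for illustration.

```python
import math


def l2_normalize(v):
    """Scale a vector to unit length (an all-zero vector is returned unchanged)."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v] if norm > 0 else list(v)


def center_loss(features, labels, centers):
    """Batch mean of 0.5 * squared distance between each feature and its class center."""
    total = 0.0
    for f, y in zip(features, labels):
        total += 0.5 * sum((a - b) ** 2 for a, b in zip(f, centers[y]))
    return total / len(features)


def orthogonal_projection_loss(features, labels, gamma=0.5):
    """(1 - s) + gamma * d over all feature pairs in the batch.

    s: mean cosine similarity of same-class pairs (0.0 if there are none).
    d: mean absolute cosine similarity of different-class pairs (0.0 if none).
    gamma=0.5 is an illustrative default, not a value taken from the paper.
    """
    unit = [l2_normalize(f) for f in features]
    same, diff = [], []
    for i in range(len(unit)):
        for j in range(i + 1, len(unit)):
            cos = sum(a * b for a, b in zip(unit[i], unit[j]))
            (same if labels[i] == labels[j] else diff).append(cos)
    s = sum(same) / len(same) if same else 0.0
    d = sum(abs(c) for c in diff) / len(diff) if diff else 0.0
    return (1.0 - s) + gamma * d


# Toy batch: two samples of class 0 on one axis, one sample of class 1 on the other.
feats = [[1.0, 0.0], [2.0, 0.0], [0.0, 3.0]]
labs = [0, 0, 1]
cents = {0: [1.5, 0.0], 1: [0.0, 3.0]}
print(center_loss(feats, labs, cents), orthogonal_projection_loss(feats, labs))
```

In this toy batch the classes already lie on orthogonal axes, so the orthogonal projection term vanishes while the center term still penalizes the spread of class 0 around its center. That is one way to see why the two terms are complementary rather than redundant.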


Jianing An, Changchun Zhang, Jiantao Wang, Zhiyong Pei, Dandan Bai, Junguo Zhang (2025) An open-set domain adaptation method for wildlife image recognition via adversarial disentanglement and feature alignment. Biodiversity Science, 33, 25283. DOI: 10.17520/biods.2025283.

| Dataset | Wild boar | Chinese goral | Wapiti | Eurasian lynx | Raccoon dog | Eurasian badger | Manchurian hare | Eastern roe deer |
|---|---|---|---|---|---|---|---|---|
| D | 911 | 305 | 315 | 79 | 193 | 214 | 25 | 168 |
| N | 1,449 | 758 | 229 | 136 | 560 | 429 | 401 | 25 |

Table 1 Statistical information of the wildlife image dataset from the Ulaanba National Nature Reserve, Inner Mongolia

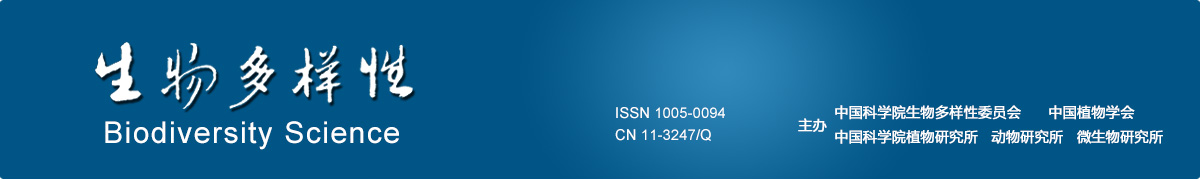

Fig. 1 t-SNE visualization of feature distributions for sub-datasets D and N in the DN dataset. Blue dots represent source-domain samples; orange dots represent target-domain samples.

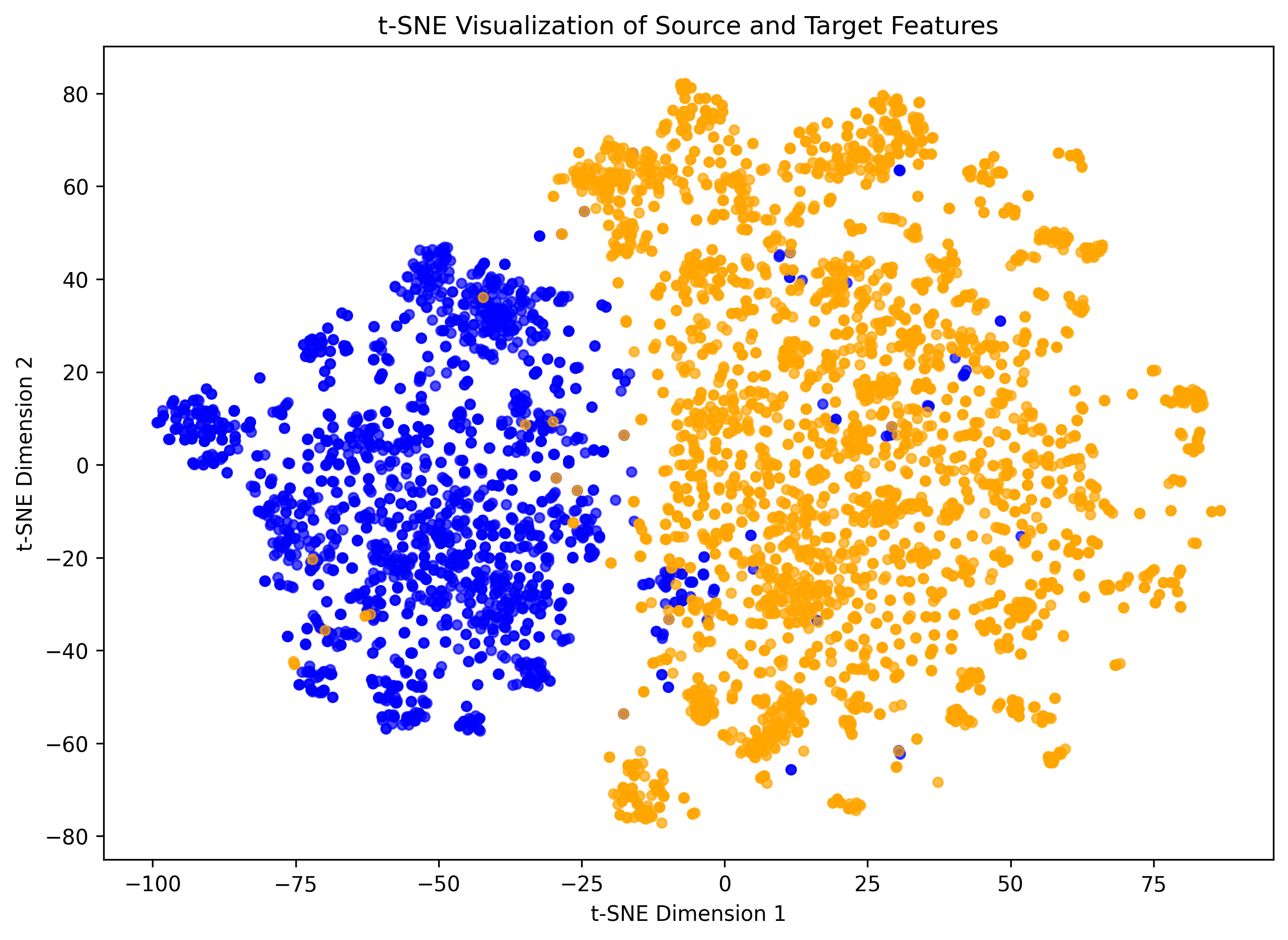

Fig. 2 Comparison of fine-grained class-conditional distributions for sub-datasets D and N in the DN dataset. Colors indicate classes; circles represent source-domain samples and triangles represent target-domain samples.

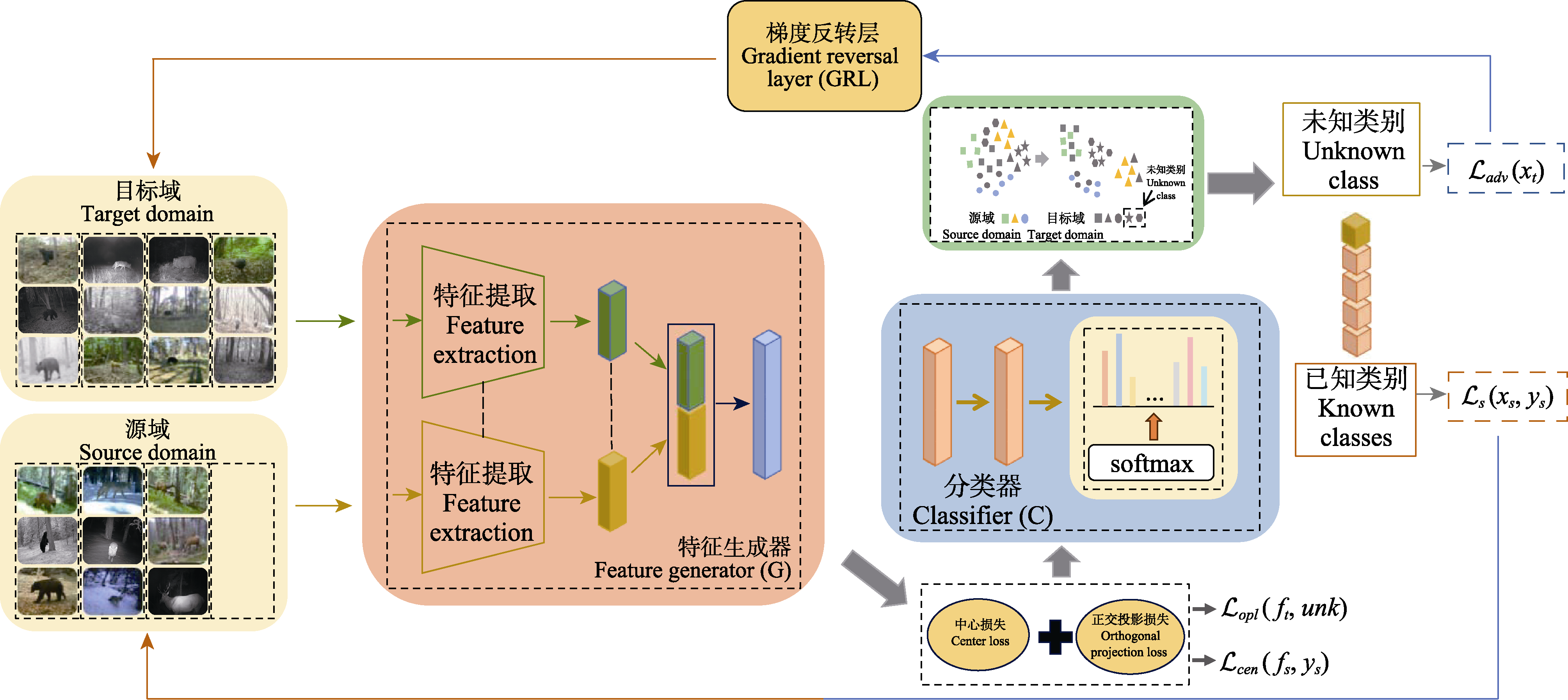

Fig. 5 Network architecture for open-set domain adaptation of wildlife images via adversarial disentanglement and feature alignment

| Model | D→N OS (%) | D→N OS* (%) | D→N UNK (%) | D→N HOS (%) | N→D OS (%) | N→D OS* (%) | N→D UNK (%) | N→D HOS (%) | Average-HOS (%) |
|---|---|---|---|---|---|---|---|---|---|
| ResNet50 | 28.00 | 25.07 | 51.51 | 33.72 | 29.15 | 25.29 | 52.33 | 34.10 | 33.91 |
| DANN | 33.55 | 30.85 | 49.78 | 38.09 | 34.87 | 33.31 | 44.24 | 38.00 | 38.05 |
| ROS | 28.91 | 20.87 | 77.13 | 32.85 | 31.91 | 30.86 | 38.20 | 34.14 | 33.50 |
| ROS* | 24.36 | 20.58 | 47.05 | 28.63 | 30.25 | 28.89 | 38.42 | 32.98 | 30.81 |
| MTS | 31.78 | 37.08 | 25.78 | 30.41 | 22.15 | 25.84 | 39.26 | 31.17 | 30.79 |
| Ours | 43.21 | 41.21 | 55.16 | 47.18 | 44.32 | 40.30 | 68.39 | 50.72 | 48.95 |

Table 2 Comparison of experimental results of different models on the DN wildlife dataset
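The HOS and Average-HOS columns are not defined in this section. In the open-set domain adaptation literature, HOS is usually the harmonic mean of the known-class accuracy OS* and the unknown-class accuracy UNK, and the tabulated values agree with that definition to within rounding. The sketch below assumes that definition and takes Average-HOS as the plain mean over the two transfer tasks; the function names are illustrative.

```python
def hos(os_star, unk):
    """Harmonic mean of known-class accuracy (OS*) and unknown-class accuracy (UNK)."""
    if os_star + unk == 0:
        return 0.0
    return 2.0 * os_star * unk / (os_star + unk)


def average_hos(hos_values):
    """Arithmetic mean of per-task HOS values."""
    return sum(hos_values) / len(hos_values)


# (OS*, UNK) pairs from the "Ours" row of Table 2: D→N, then N→D.
ours = [(41.21, 55.16), (40.30, 68.39)]
per_task = [hos(a, b) for a, b in ours]
print([round(v, 2) for v in per_task], round(average_hos(per_task), 2))
```

Because the harmonic mean is dominated by the smaller of its two inputs, a model cannot score well on HOS by rejecting everything as unknown or by never rejecting anything. The MTS row for D→N shows this: its OS* of 37.08 is well above ResNet50's 25.07, yet its HOS of 30.41 is lower than ResNet50's 33.72 because UNK is only 25.78.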

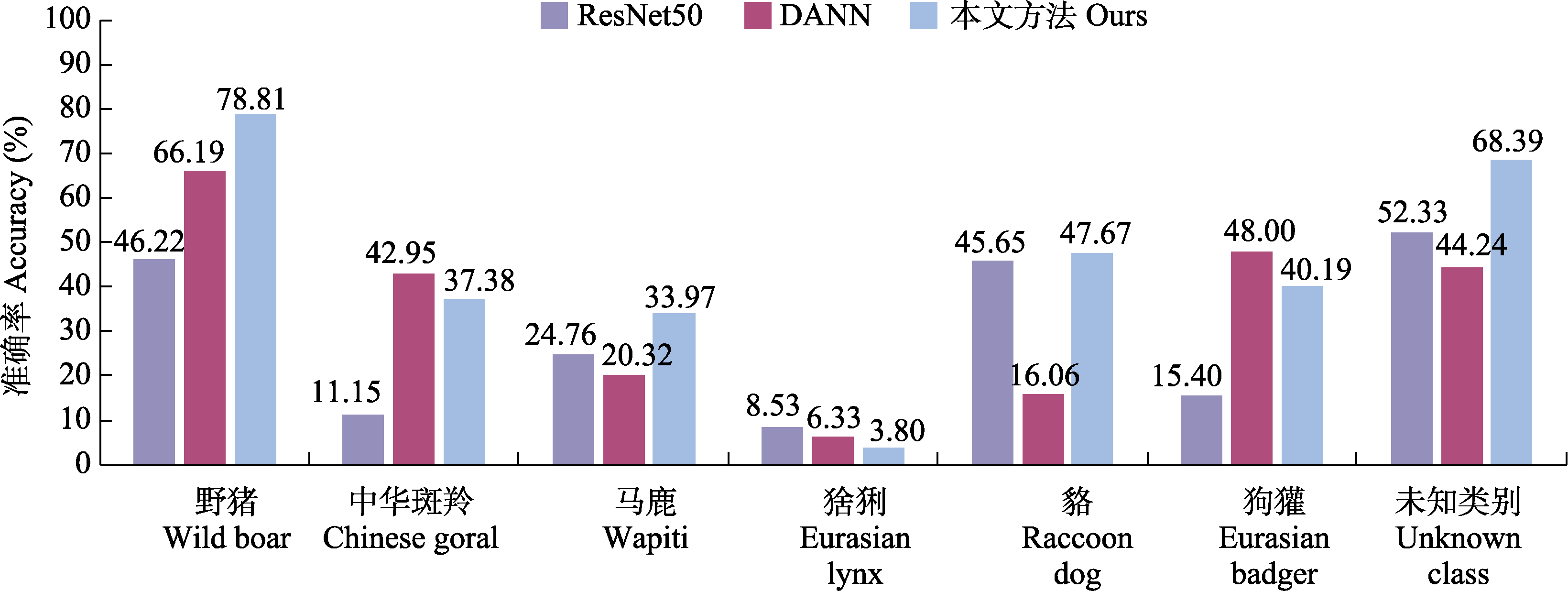

Fig. 6 Per-class recognition accuracy comparison on the N→D task. ResNet50 and DANN are the comparison methods; Ours denotes the proposed method.

| Model | S1→S2 OS (%) | S1→S2 OS* (%) | S1→S2 UNK (%) | S1→S2 HOS (%) | S2→S1 OS (%) | S2→S1 OS* (%) | S2→S1 UNK (%) | S2→S1 HOS (%) | Average-HOS (%) |
|---|---|---|---|---|---|---|---|---|---|
| ResNet50 | 28.00 | 25.07 | 51.51 | 33.72 | 37.91 | 37.25 | 43.15 | 39.99 | 36.85 |
| DANN | 32.58 | 30.22 | 51.40 | 38.06 | 39.52 | 37.92 | 52.27 | 43.96 | 41.01 |
| ROS | 23.58 | 18.39 | 65.10 | 28.68 | 23.94 | 19.37 | 60.51 | 29.34 | 29.01 |
| ROS* | 16.58 | 8.05 | 84.82 | 14.70 | 20.24 | 12.57 | 81.61 | 21.78 | 18.24 |
| MTS | 27.08 | 25.94 | 36.20 | 30.22 | 35.83 | 34.58 | 33.42 | 33.99 | 32.11 |
| Ours | 38.05 | 35.04 | 62.10 | 44.80 | 41.80 | 39.34 | 61.43 | 47.96 | 46.38 |

Table 3 Comparison of experimental results of different models on the S1S2 wildlife dataset
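The improvement figures quoted in the abstract can be recovered from the Average-HOS columns of Tables 2 and 3. The short check below copies those values and computes the margin of the proposed method over the strongest comparison method on each dataset; the helper name `margin_over_best_baseline` is illustrative.

```python
# Average-HOS (%) values copied from Table 2 (DN dataset) and Table 3 (S1S2 dataset).
table2 = {"ResNet50": 33.91, "DANN": 38.05, "ROS": 33.50,
          "ROS*": 30.81, "MTS": 30.79, "Ours": 48.95}
table3 = {"ResNet50": 36.85, "DANN": 41.01, "ROS": 29.01,
          "ROS*": 18.24, "MTS": 32.11, "Ours": 46.38}


def margin_over_best_baseline(scores, ours_key="Ours"):
    """Return (best comparison method, margin of ours over it in percentage points)."""
    baselines = {k: v for k, v in scores.items() if k != ours_key}
    best = max(baselines, key=baselines.get)
    return best, round(scores[ours_key] - baselines[best], 2)


for name, scores in (("DN", table2), ("S1S2", table3)):
    print(name, margin_over_best_baseline(scores))
```

On both datasets the strongest comparison method is DANN, and the margins are absolute differences in Average-HOS, that is, percentage points rather than relative gains.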

| Network | D→N OS (%) | D→N OS* (%) | D→N UNK (%) | D→N HOS (%) | N→D OS (%) | N→D OS* (%) | N→D UNK (%) | N→D HOS (%) | Average-HOS (%) |
|---|---|---|---|---|---|---|---|---|---|
| ResNet18 | 29.88 | 32.04 | 16.90 | 22.13 | 36.20 | 37.14 | 30.57 | 33.54 | 27.83 |
| ResNet34 | 36.19 | 32.05 | 61.03 | 42.03 | 37.41 | 38.46 | 31.09 | 34.38 | 38.21 |
| ResNet50 | 43.21 | 41.21 | 55.16 | 47.18 | 44.32 | 40.30 | 68.39 | 50.72 | 48.95 |
| ResNet101 | 35.55 | 32.40 | 54.46 | 40.63 | 27.16 | 28.32 | 20.21 | 23.59 | 32.11 |
| ResNet152 | 33.65 | 35.04 | 25.35 | 29.42 | 42.26 | 38.94 | 62.18 | 47.89 | 38.65 |
| Wide_ResNet50_2 | 33.14 | 29.16 | 57.04 | 38.59 | 44.62 | 48.51 | 21.24 | 29.55 | 34.07 |
| ResNext50_32x4d | 34.95 | 31.23 | 57.28 | 40.42 | 26.61 | 27.50 | 21.24 | 23.97 | 32.19 |

Table 4 Performance comparison of feature extraction networks

| Adversarial training | Orthogonal projection loss | Center loss | D→N OS (%) | D→N OS* (%) | D→N UNK (%) | D→N HOS (%) | N→D OS (%) | N→D OS* (%) | N→D UNK (%) | N→D HOS (%) | Average-HOS (%) |
|---|---|---|---|---|---|---|---|---|---|---|---|
| - | - | - | 28.00 | 25.07 | 51.51 | 33.72 | 29.15 | 25.29 | 52.33 | 34.10 | 33.91 |
| - | - | √ | 30.67 | 28.00 | 46.70 | 35.01 | 32.11 | 29.12 | 50.00 | 36.80 | 35.91 |
| - | √ | - | 31.00 | 28.27 | 47.36 | 35.40 | 26.53 | 20.61 | 62.04 | 30.94 | 33.17 |
| √ | - | - | 37.85 | 35.91 | 49.53 | 41.63 | 41.99 | 38.54 | 62.69 | 47.73 | 44.68 |
| - | √ | √ | 30.77 | 28.12 | 46.70 | 35.10 | 32.72 | 29.57 | 51.57 | 37.59 | 36.35 |
| √ | √ | - | 33.18 | 30.29 | 50.47 | 37.86 | 40.56 | 35.14 | 73.06 | 47.46 | 42.66 |
| √ | - | √ | 46.78 | 50.08 | 27.00 | 35.08 | 43.48 | 38.64 | 72.54 | 50.42 | 42.75 |
| √ | √ | √ | 43.21 | 41.21 | 55.16 | 47.18 | 44.32 | 40.30 | 68.39 | 50.72 | 48.95 |

Table 5 Ablation results for the model components

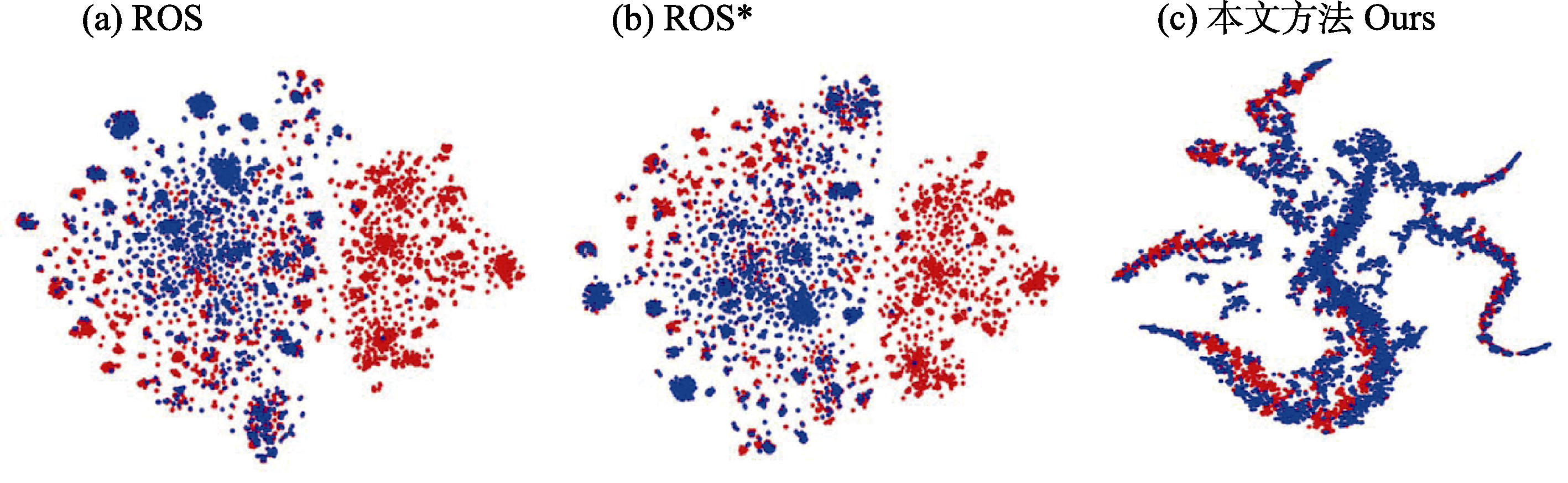

Fig. 8 t-SNE embedding visualization of feature distributions. ROS and ROS* are comparison methods used in this study, where ROS* is ROS with the weight ω_unk = 1. Red dots represent source-domain features, and blue dots represent target-domain features.

